No compiler will ever decide every safety question about your program. Alan Turing proved it in 1936, and every language picks its own way to live with that limit. By the end of this post, you will know why the limit exists, and how Static Analysis can help to build your safety case.

A junior colleague once asked me a great question. “If the compiler is so smart, why does it not just tell me whether my code crashes?” I gave the only answer: because mathematics says it cannot. He looked at me as if I had just claimed the earth was flat. Then I drew a small diagram on the whiteboard, and ten minutes later he said the magic words: “So every safety claim from any tool is, at best, a partial answer.” Yes. And that is exactly the lens I want to give you today.

The Halting Problem in one paragraph

A while ago we talked about the notion of undecidability, as in “properties that are hard to verify”. Let’s refine that now.

In 1936, Alan Turing asked a simple question: can we build a procedure that, given any program and any input, decides whether the program eventually stops? He proved the answer is no. Not “no with current technology”, but no, ever, by contradiction. Assume such a decider exists. Then you can build a small program that asks the decider about itself and does the opposite of what it predicts. The decider must be wrong about that program. Therefore the decider cannot exist, and the halting question is undecidable: no algorithm can answer it for every program.

That is the Halting Problem. It looks like a curiosity about loops, but it is much bigger. Rice’s theorem extends the result to every non-trivial semantic property of programs. “Does this program ever crash?” Undecidable. “Does it ever divide by zero?” Undecidable. “Does it leak memory?” Undecidable. In general, anyway. Specific cases are often easy; the general case is impossible.

No algorithm can precisely decide every safety question about every program. This is a mathematical fact, not a tooling gap.

Daily-life analogy: German bureaucracy

Imagine you submit an application to a German authority. They love bureaucracy and forms. Now your form refers to a second form, which refers to a third, which refers to a regulation, which refers back to your original form under certain conditions. You ask a clerk: “Will my paperwork ever be complete?” In some cases, yes, the clerk can see a clear path to the end. In other cases, no, there is an obvious cycle. But for many real cases the only way to know is to run the process and see. Looking at the forms alone is not enough.

That is similar to what a compiler or a static analyzer faces. It reads your source, it follows references, it reasons about cycles and conditions, and at some point the reasoning gets stuck. The forms could lead anywhere. The analyzer must decide: report uncertainty, assume the best, or refuse to analyze further. That choice has real consequences for what bugs get reported downstream, as explored in how error absorption works.

A compiler reads code the way a clerk reads forms. Some questions resolve quickly. Others resolve never.

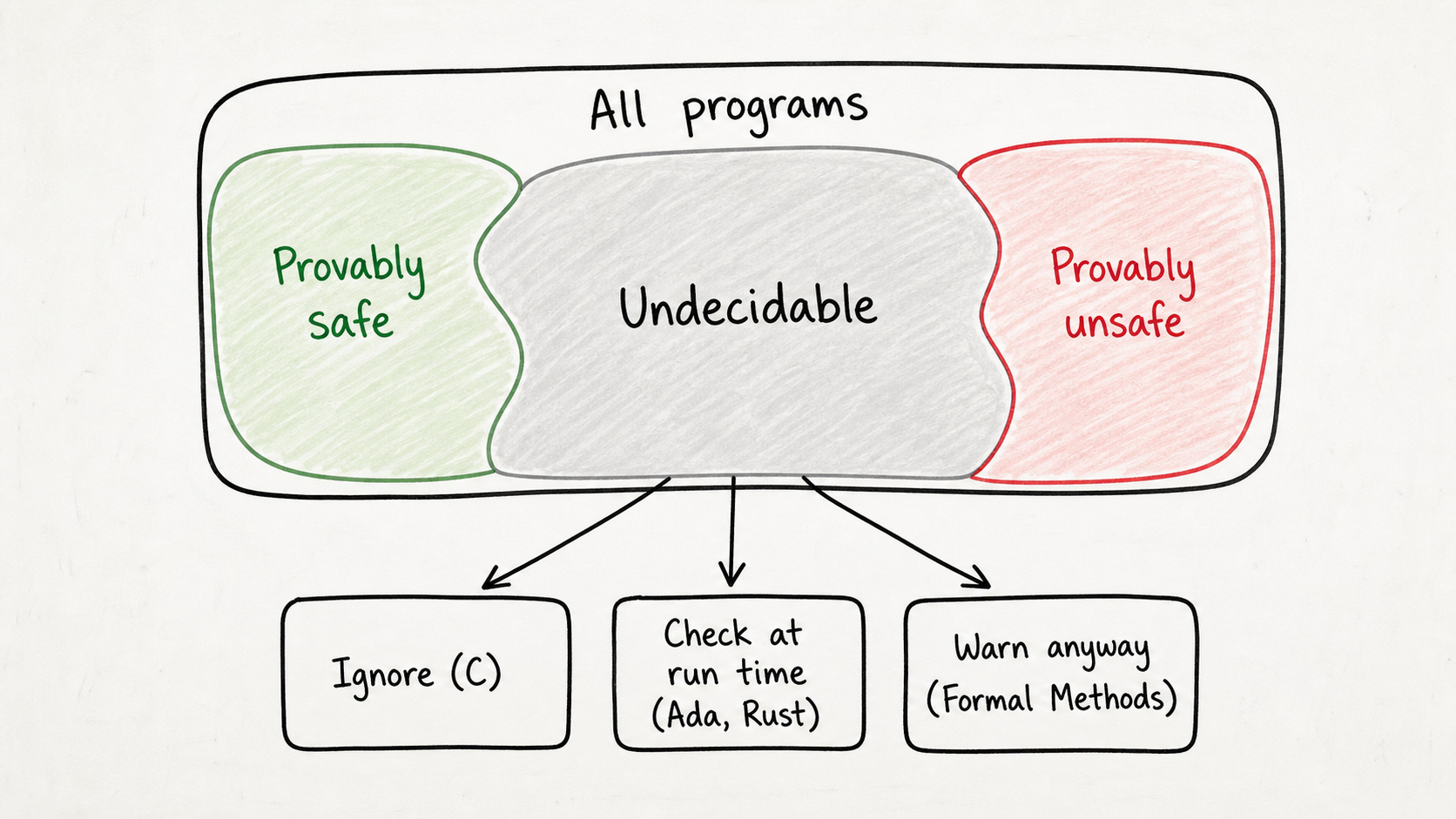

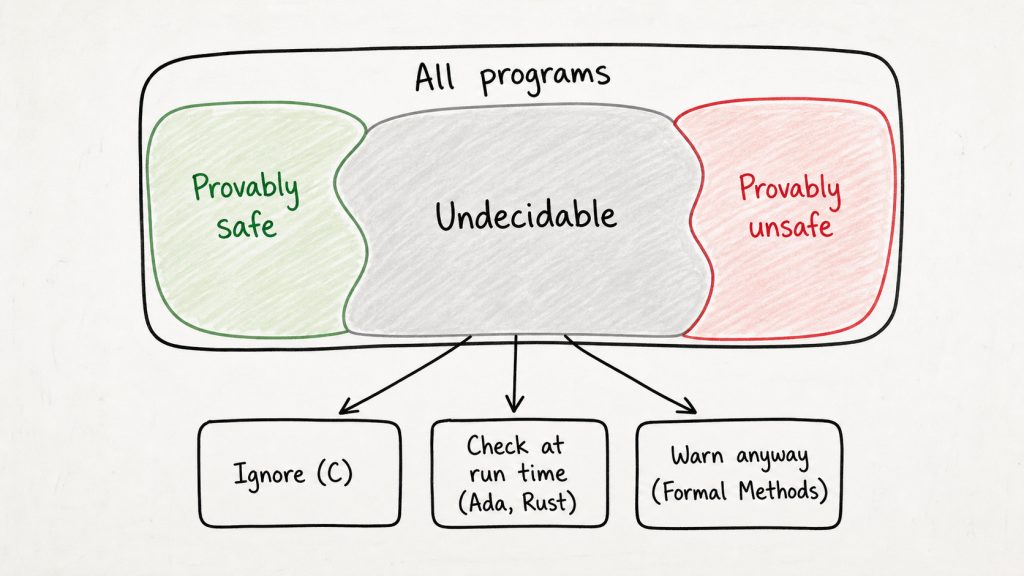

What this means for compilers

Every compiler faces the same undecidable corners, and each language has picked a different reaction. C and C++ are the clerk who stamps every form and waves you through: emit code that mirrors the source and trust the programmer. Divide by zero? The standard calls it undefined behavior and walks away. Read past the end of a buffer? Same. The compiler does not crash, the program does, sometimes years later, in production.

int divide(int a, int b) {

return a / b; // Compiler: "Looks fine to me."

}The C compiler often has no idea whether b is ever zero. It cannot know in general. So it shrugs, emits a divide instruction, and ships. If b is zero at runtime, the CPU raises an exception and your program is gone.

Ada and Rust split the same problem in two. Their compilers prove what they can statically: Rust’s borrow checker rejects unsafe aliasing at compile time, Ada’s strong typing rejects mismatched ranges before the binary is even produced.

For the cases that are quickly decidable, you get a hard error and the code does not compile. Where the compiler cannot decide, the language inserts a run-time check: range checks on assignments in Ada, array-bounds and overflow checks in Rust. When a check fires, the program raises an exception or panics at run time, instead of corrupting memory. An undecidable case becomes a controlled failure rather than a silent disaster, and I have written about why memory safety in Rust is less safe than the marketing suggests precisely because of the run-time half of that bargain.

Even the best compilers for the safest languages can only find some safety issues during compilation, and turn the undecidable issues into run-time checks.

What this means for Static Analysis

Static Analysis tools go deeper than compilers. They look across the whole program, not just a single translation unit, and they are allowed to run longer and can afford more powerful algorithms. That extra scope and budget lets them decide many cases a compiler would have to skip. The practical payoff is that they can guarantee safety properties even on “lazy” languages like C, where the compiler itself has given up.

But Static Analysis does not lift the Turing limit, only pushes it back. It lives in the same trap, and it has exactly two ways out of uncertainty:

- Either it accepts false negatives and silently misses some real bugs.

- Or it accepts false positives and warns about code that is in fact correct.

There is no third door. You cannot have a static analyzer or compiler that has zero false positives and zero false negatives at the same time. Turing closed that door. Hence, every tool must decide for one of the two paths.

Most commercial analyzers pick the first path: they stay quiet when uncertain, so the report stays short and the developers stay happy. The cost is that some bugs slip through, even though the tool ran cleanly. Your typical linter falls into this category.

Only a few advanced tools pick the second path: they use Formal Methods to never miss a bug. But the price is that they must raise a warning in case of undecidability. This results in false positives, but also in a stronger guarantee: if the tool says nothing, you are safe. That is the trade-off which has been circling for decades, and the Halting Problem is the reason it will never go away.

Every static analyzer either misses real bugs or flags non-bugs. There is no tool that does neither.

Do’s and Don’ts

- ✅ Do assume every safety question your tool answers comes with an I don’t know category, even if the report does not show one.

- ✅ Do layer techniques: a strongly typed language, plus static analysis, plus run-time checks, plus formal proof when there is no room for surprises.

- ⚠️ Don’t trust any tool that promises to find all bugs and zero false positives at the same time. That combination contradicts a 1936 theorem; one of the two claims has to give.

- ⚠️ Don’t mistake the absence of warnings for the presence of safety. A silent analyzer might just be the kind that misses bugs, and a silent compiler is silent because it had to be.

- ⚠️ Don’t expect your compiler to prove that your program is safe. That is not its job, and it is not within its power.

Summary

The Halting Problem is not an academic footnote. It is the reason every compiler, every analyzer, and every type system has gaps, and the reason why compilers cannot guarantee safety. Every language designer has to pick a strategy for those gaps, and the clerk in our German-bureaucracy analogy lives inside every one of them. Each strategy has a cost, and each is useful as long as the vendor is honest about which trade-off they picked.

If you write code, enable run-time checks where your language offers them, and run static analysis on top to catch what the language alone misses. If you talk to teams, share this post when someone claims their tool catches everything, because the conversation needs that grounding. If you evaluate tools for a project, ask vendors which failure mode they accept: false negatives or false positives, because they cannot avoid both.